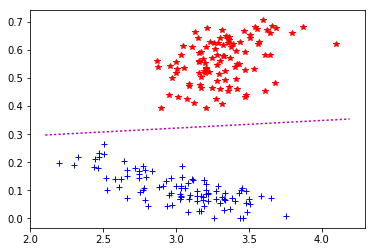

The optimal separating hyperplane and the margin

In words: in a binary classification problem, given a linearly separable data set, the optimal separating hyperplane is the one that correctly classifies all the data while being farthest away from the data points. In this respect, it is said to be the hyperplane that maximizes the margin, defined as the distance from the hyperplane to the closest data point.

The idea behind the optimality of this classifier can be illustrated as follows. New test points are drawn according to the same distribution as the training data. Thus, if the separating hyperplane is far away from the data points, previously unseen test points will most likely fall far away from the hyperplane or in the margin. As a consequence, the larger the margin is, the less likely the points are to fall on the wrong side of the hyperplane.

Finding the optimal separating hyperplane can be formulated as a convex quadratic programming problem, which can be solved with well-known techniques.

The optimal separating hyperplane should not be confused with the optimal classifier known as the Bayes classifier: the Bayes classifier is the best classifier for a given problem, independently of the available data but unattainable in practice, whereas the optimal separating hyperplane is only the best linear classifier one can produce given a particular data set.

The optimal separating hyperplane is one of the core ideas behind support vector machines. In particular, it gives rise to the so-called support vectors, which are the data points lying on the margin boundary of the hyperplane. These points support the hyperplane in the sense that they contain all the information required to compute it: removing other points does not change the optimal separating hyperplane. Elaborating on this fact, one can actually add points to the data set without influencing the hyperplane, as long as these points lie outside of the margin.

The plot shows the optimal separating hyperplane and its margin for a data set in 2 dimensions. The support vectors are the highlighted points lying on the margin boundary.

Given a linearly separable data set $\{(\mathbf{x}_i, y_i)\}_{i=1}^N$ with labels $y_i \in \{-1, +1\}$, the optimal separating hyperplane $H^*$, with parameters $(\mathbf{w}^*, b^*)$, is the solution of

$$
\min_{\mathbf{w}, b} \ \frac{1}{2} \|\mathbf{w}\|^2 \quad \text{s.t.} \quad y_i (\mathbf{w}^T \mathbf{x}_i + b) \geq 1, \ i=1,\dots,N.
$$

At this point, we have formulated the determination of the optimal separating hyperplane as an optimization problem known as a convex quadratic program, for which efficient solvers exist.

The support vectors are the data points $\mathbf{x}_i$ that lie exactly on the margin boundary and thus satisfy

$$
y_i ({\mathbf{w}^*}^T \mathbf{x}_i + b^*) = 1.
$$

These points (if considered with their labels) provide sufficient information to compute the optimal separating hyperplane. To see this, note that removing any other point from the data set amounts to removing a non-active constraint from the quadratic program above. In addition, one can add as many points as one wishes to the data set without affecting $H^*$, as long as these points are well classified by $H^*$ and lie outside the margin. Again, this amounts to adding constraints to the optimization problem and contracting its feasible set. But as long as the previous solution $H^*$ remains feasible, the solution of the optimization problem does not change.

I'm currently using `SVC` to separate two classes of data (the features are named `data` and the labels are `condition`). After fitting the data using `GridSearchCV`, I get a classification score of about ...
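The statements above about support vectors can be checked numerically. What follows is a minimal sketch rather than the post's own code: it assumes scikit-learn is installed, approximates the hard-margin problem with `SVC(kernel="linear")` and a very large `C` (an assumption; a dedicated quadratic programming solver would do as well), and runs on a made-up toy data set. The names `data` and `condition` follow the naming mentioned in the text, and `fit_hyperplane` is a hypothetical helper introduced only for this illustration.

```python
import numpy as np
from sklearn.svm import SVC


def fit_hyperplane(data, condition):
    """Fit a linear SVC with a very large C to approximate the hard-margin problem.

    Returns the hyperplane parameters (w, b) of the decision function w.x + b.
    """
    clf = SVC(kernel="linear", C=1e6, tol=1e-6)
    clf.fit(data, condition)
    return clf.coef_[0], clf.intercept_[0]


# Toy linearly separable data set in 2 dimensions (made up for illustration).
data = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0],
                 [3.0, 3.0], [4.0, 3.0], [3.0, 4.0]])
condition = np.array([-1, -1, -1, 1, 1, 1])

w, b = fit_hyperplane(data, condition)

# Geometric margin: distance from the hyperplane to the closest data point.
margin = 1.0 / np.linalg.norm(w)

# Functional margins y_i (w.x_i + b): about 1 for support vectors, larger otherwise.
functional_margins = condition * (data @ w + b)
is_support = np.isclose(functional_margins, 1.0, atol=1e-3)

print("w =", w, "b =", b, "margin =", margin)
print("support vectors:\n", data[is_support])

# Removing a point that is not a support vector should leave the hyperplane unchanged.
w_removed, b_removed = fit_hyperplane(np.delete(data, 0, axis=0),
                                      np.delete(condition, 0))

# Adding a well-classified point outside the margin should not change it either.
new_point = np.array([[5.0, 5.0]])
w_added, b_added = fit_hyperplane(np.vstack([data, new_point]),
                                  np.append(condition, 1))

print("after removal: w =", w_removed, "b =", b_removed)
print("after addition: w =", w_added, "b =", b_added)
```

For this toy set, solving the constraints by hand gives a hyperplane close to $\mathbf{w} = (0.4, 0.4)$ and $b = -1.4$, with the points $(1, 0)$, $(0, 1)$ and $(3, 3)$ on the margin boundary. The three fits should agree up to the solver tolerance.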
